Introduction: The Shift From Cloud AI to Edge AI

In 2026, the AI hardware landscape has fundamentally changed. For the past several years, most AI processing happened in cloud data centers—powerful but distant, requiring constant internet connectivity and raising privacy concerns. That era is now giving way to a new paradigm: Edge AI.

By mid-2026, inference workloads—the computational work of running AI models—account for approximately two-thirds of all AI compute, surpassing training for the first time. This “inference flip” is reshaping how hardware startups design and build their products. Instead of relying on cloud connectivity, smart devices are becoming intelligent on their own, processing data locally with unprecedented speed and privacy.

For hardware founders, this shift creates both opportunities and challenges. Products that leverage edge AI can offer real-time responsiveness, work offline, and protect user data in ways cloud-dependent devices cannot. But designing for edge AI requires different thinking—about chip selection, power management, thermal design, and product architecture.

This article explores what hardware founders need to know about edge AI in 2026, including market trends, design considerations, and practical guidance for building next-generation smart hardware products.

Why Edge AI Is Dominating in 2026

The Economics Have Changed

Cloud AI inference costs have become unsustainable for many applications. Industry analysis suggests that organizations reach a tipping point, where on-premises or edge deployment becomes more economical than cloud services, once ongoing cloud costs exceed roughly 60-70% of the cost of equivalent on-premises systems. For high-volume consumer products selling thousands or millions of units, the math becomes compelling.

A single training run for a state-of-the-art language model now consumes more electricity than a small city. While training remains a cloud-centric activity, inference—the ongoing, real-time work of running AI—has shifted toward edge deployment. This reflects practical needs:

- Latency reduction: Real-time applications like autonomous driving, industrial control, and interactive devices cannot tolerate round-trips to distant data centers

- Privacy compliance: Regulations in the EU, California, and elsewhere increasingly restrict how sensitive data can be transmitted and stored

- Reliability: Edge devices continue functioning during internet outages, network congestion, or in areas with limited connectivity

- Cost efficiency: Once hardware is deployed, edge inference has near-zero marginal cost compared to per-query cloud fees
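The cost argument above can be made concrete with a simple break-even calculation. The following is a minimal sketch, not a pricing model: the function name `break_even_queries` and all dollar figures are hypothetical assumptions chosen for illustration, and it ignores development cost, cloud volume discounts, and hardware depreciation.

```python
import math


def break_even_queries(edge_unit_cost: float,
                       cloud_cost_per_query: float,
                       edge_cost_per_query: float = 0.0) -> int:
    """Number of queries after which one edge device is cheaper than cloud inference.

    edge_unit_cost: extra hardware cost per device for on-device inference.
    cloud_cost_per_query: per-query cloud inference fee.
    edge_cost_per_query: marginal on-device cost per query (e.g. electricity).
    """
    saving_per_query = cloud_cost_per_query - edge_cost_per_query
    if saving_per_query <= 0:
        raise ValueError("edge inference never pays back at these prices")
    return math.ceil(edge_unit_cost / saving_per_query)


# Hypothetical figures: $12 of added hardware cost for an NPU,
# $0.002 per cloud query, $0.0001 of electricity per on-device query.
print(break_even_queries(12.0, 0.002, 0.0001))  # prints 6316
```

With these assumed numbers, a device that handles a few dozen queries per day would pass break-even within its first year; a device queried once a week would not.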

Market Growth and Investment

The edge AI market is experiencing explosive growth. AI-enabled industrial PCs (AI Boxes) are projected to reach approximately 20% penetration by 2026, driven primarily by vertical applications in manufacturing, healthcare, transportation, and energy. The broader AI chip market is expected to reach $564.8 billion in 2026, with inference-optimized chips representing a significant and growing share.

Major chip manufacturers are responding accordingly. NVIDIA’s Rubin architecture, AMD’s MI400 series, and Google’s Ironwood TPU v7 all launch in H2 2026 with massive performance improvements optimized for inference workloads. More importantly, the proliferation of neural processing units (NPUs) in consumer devices—from smartphones to smart home products—means edge AI capabilities are becoming standard rather than premium features.

Key Trends Shaping Edge AI Hardware in 2026

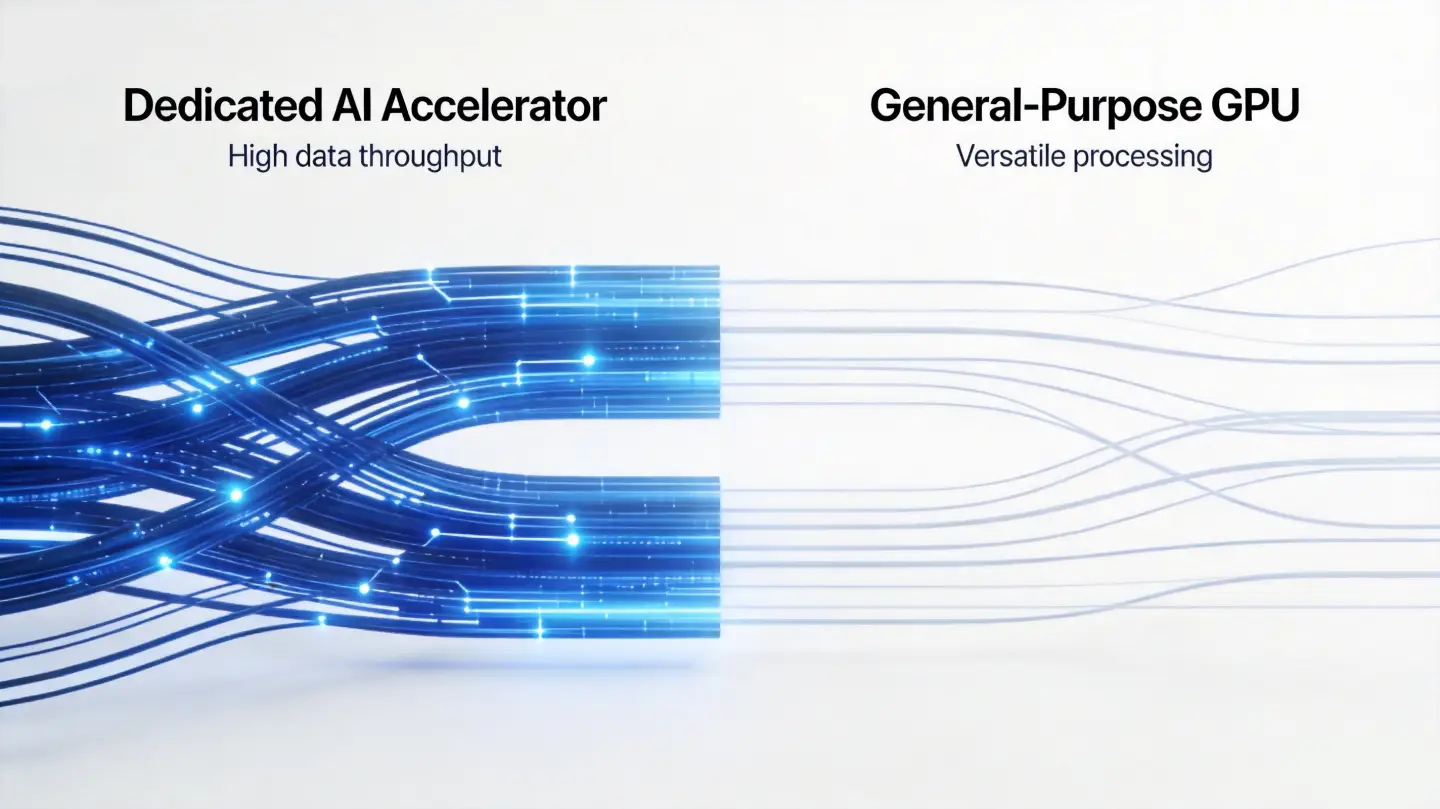

Trend 1: Specialized AI Accelerators Replacing General-Purpose GPUs

The era of using general-purpose GPUs for everything AI-related is ending. Custom ASICs (Application-Specific Integrated Circuits) optimized for AI inference are growing at 44.6% annually, compared to 16.1% for traditional GPUs. Cloud service providers’ in-house AI chips, including Google’s TPUs, Amazon’s Inferentia and Trainium, and Meta’s MTIA, are taking a growing share of inference workloads.

For hardware founders, this means:

- More chip choices: The market for inference-optimized chips has expanded dramatically, offering options for various power budgets and performance requirements

- Better cost-performance ratios: Specialized chips typically deliver better performance per dollar and per watt than general-purpose alternatives for specific inference tasks

- New vendor relationships: Working with AI chip startups and established players requires understanding their roadmaps and ecosystem strategies

Trend 2: HBM4 Memory Enabling Larger On-Device Models

High Bandwidth Memory 4 (HBM4) represents a generational leap in memory technology critical for edge AI:

- Bandwidth: Over 2TB/s per stack, approximately 60% faster than HBM3e

- Capacity: 16-24GB per stack with up to 16 layers

- Power efficiency: Approximately 40% more power-efficient than previous generations

This memory advancement lets larger AI models run outside the data center. Where earlier hardware was often limited to pruned or quantized models, systems equipped with HBM4 have the capacity and bandwidth to hold larger models at higher precision locally. This has profound implications for product capabilities, from voice assistants that understand complex context to cameras that recognize nuanced visual scenes without cloud processing.
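Whether a model fits in a given memory budget is mostly arithmetic: parameter count times bytes per parameter, plus runtime overhead. The sketch below illustrates this using the 16GB and 24GB stack capacities quoted above. The 7-billion-parameter model size and the 20% overhead allowance (for activations, caches, and runtime buffers) are assumptions for illustration, not measured values.

```python
# Bytes needed to store one parameter at each numeric precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: float, precision: str, capacity_gb: float,
         overhead_fraction: float = 0.2) -> bool:
    """True if weights plus an assumed runtime overhead fit in capacity_gb."""
    needed = weight_memory_gb(num_params, precision) * (1 + overhead_fraction)
    return needed <= capacity_gb


params = 7e9  # hypothetical 7-billion-parameter model
for precision in ("fp16", "int8", "int4"):
    gb = weight_memory_gb(params, precision)
    print(f"{precision}: {gb:.1f} GB weights, "
          f"fits 16 GB: {fits(params, precision, 16)}, "
          f"fits 24 GB: {fits(params, precision, 24)}")
```

Under these assumptions the FP16 weights alone take 14 GB, which fits a 24GB stack but not a 16GB one once overhead is included, while the INT4 version needs only 3.5 GB.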

Trend 3: AI Smart Glasses Emerging as Mass Market Products

AI smart glasses are transitioning from experimental devices to early mass-market products. Key developments in 2026:

- Maturity of components: Low-power chips, sensors, and optical modules now support scalable production

- Projected shipments: Global AI smart glasses shipments are expected to reach approximately 9.5 million units in 2026

- Use cases emerging: Live navigation, real-time translation, floating notifications, and hands-free productivity are proving compelling

For hardware founders, AI glasses represent a new product category with significant growth potential. However, success requires balancing weight, battery life, display quality, and thermal management—a challenging engineering problem that rewards deep expertise in miniaturization and power optimization.

Trend 4: Health Wearables Advancing to Clinical Grade

Consumer health wearables are evolving beyond basic metrics toward clinical-grade monitoring:

- Continuous glucose monitoring becoming mainstream in non-diabetic populations

- Predictive health alerts for cardiovascular events, stress detection, and early illness signs

- Medical-grade sensors requiring regulatory clearance (FDA, CE) for claims

This trend creates opportunities for startups targeting preventive health and chronic condition management. However, clinical-grade accuracy requires careful sensor selection, rigorous validation protocols, and often regulatory engagement—considerations that extend timelines and increase development complexity.

Design Considerations for Edge AI Hardware

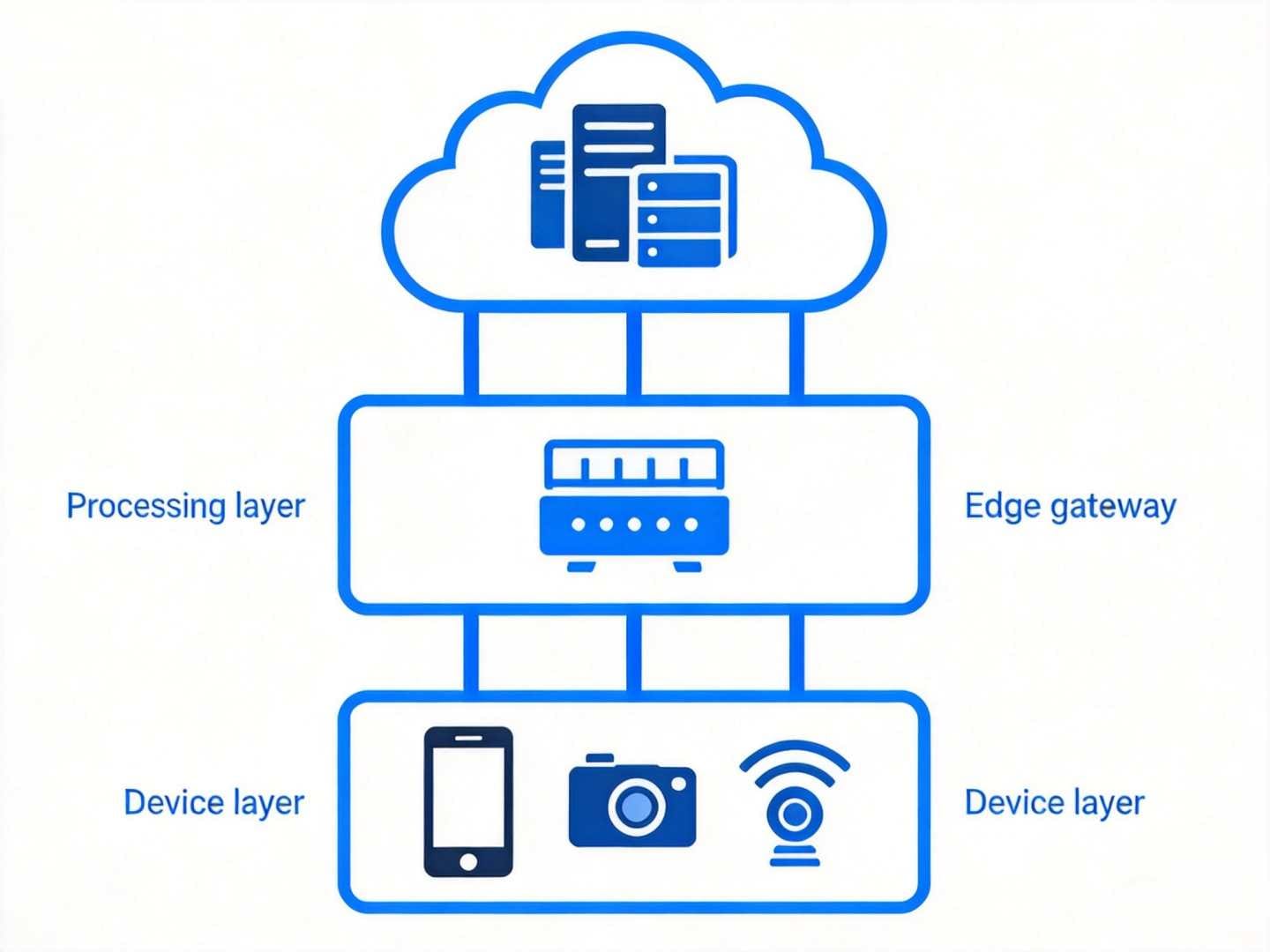

Hardware Architecture: The Three-Tier Model

Leading organizations in 2026 are deploying three-tier hybrid architectures:

- Cloud tier: For model training, updates, variable workloads, and burst capacity

- Edge gateway tier: Regional processing hubs for aggregation, pre-processing, and local model serving

- Device tier: On-device inference for latency-critical, privacy-sensitive, or offline-required tasks

For most hardware products, the question is not whether to use edge or cloud, but how to partition workloads across tiers effectively. This decision should be made early in architecture planning, as it affects chip selection, connectivity requirements, and software architecture.
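One way to start the partitioning exercise is to write the rules down as code and run every planned feature through them. The sketch below is a hypothetical rule set, not a standard method: the `Task` fields, the `assign_tier` function, and the 50 ms and 200 ms latency thresholds are all assumptions that a real product would replace with measured network and compute numbers.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    DEVICE = "device"
    GATEWAY = "edge gateway"
    CLOUD = "cloud"


@dataclass
class Task:
    name: str
    max_latency_ms: float            # how long the user or system can wait
    privacy_sensitive: bool = False  # raw data must not leave the device
    must_work_offline: bool = False  # must run without any connectivity


def assign_tier(task: Task,
                device_below_ms: float = 50.0,
                gateway_below_ms: float = 200.0) -> Tier:
    """Pick the tier for a task; thresholds are illustrative assumptions."""
    # Privacy and offline requirements override latency considerations.
    if task.privacy_sensitive or task.must_work_offline:
        return Tier.DEVICE
    if task.max_latency_ms < device_below_ms:
        return Tier.DEVICE
    if task.max_latency_ms < gateway_below_ms:
        return Tier.GATEWAY
    return Tier.CLOUD


tasks = [
    Task("wake-word detection", max_latency_ms=20, must_work_offline=True),
    Task("multi-camera aggregation", max_latency_ms=150),
    Task("weekly usage analytics", max_latency_ms=60_000),
]
for task in tasks:
    print(f"{task.name}: {assign_tier(task).value}")
```

Even a toy table like this forces early answers to the questions that drive chip selection and connectivity requirements: which features must never leave the device, and which can tolerate a network round-trip.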

Power Budget Management

Edge AI devices face strict power constraints that cloud servers do not. A standard CPU might require 100 watts to perform a trillion operations per second (1 TOPS) for AI tasks, while a specialized AI accelerator can do the same work at 10 watts. For battery-powered devices, this tenfold difference can determine whether a product is feasible at all.
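To see why the tenfold gap matters, it helps to convert watts into battery life. The following sketch uses the 100 W and 10 W figures from the paragraph above; the 20 Wh battery, the 0.05 W idle draw, and the 10% duty cycle are hypothetical values chosen for illustration.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency metric commonly quoted for AI silicon."""
    return tops / watts


def battery_life_hours(battery_wh: float, active_w: float,
                       idle_w: float = 0.0, duty_cycle: float = 1.0) -> float:
    """Runtime when the chip is active for duty_cycle of the time, idle otherwise."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    average_w = duty_cycle * active_w + (1.0 - duty_cycle) * idle_w
    return battery_wh / average_w


BATTERY_WH = 20.0  # hypothetical battery capacity

print(tops_per_watt(1.0, 100.0))              # CPU: 0.01 TOPS/W
print(tops_per_watt(1.0, 10.0))               # accelerator: 0.1 TOPS/W
print(battery_life_hours(BATTERY_WH, 100.0))  # CPU, always on: 0.2 hours
print(battery_life_hours(BATTERY_WH, 10.0))   # accelerator, always on: 2.0 hours
# Accelerator active 10% of the time, drawing an assumed 0.05 W when idle.
print(round(battery_life_hours(BATTERY_WH, 10.0, idle_w=0.05, duty_cycle=0.1), 1))
```

Under these assumptions the same battery lasts 12 minutes on the CPU and 2 hours on the accelerator, and duty cycling stretches the accelerator case to roughly 19 hours.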

Key strategies for managing power budgets:

- Select inference-optimized chips: Custom AI accelerators consistently outperform general-purpose processors on performance-per-watt metrics

- Dynamic frequency scaling: Match processing power to workload intensity in real-time

- Sleep modes and wake-on-events: Minimize idle power consumption through aggressive power management

- Model optimization: Quantization, pruning, and knowledge distillation reduce computational requirements without proportional accuracy loss
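Of the strategies above, quantization is the easiest to illustrate in a few lines. The sketch below shows symmetric per-tensor INT8 quantization in plain Python, which is one simple scheme among several; production toolchains typically use per-channel scales and calibration data. The function names and sample weights are made up for illustration.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


weights = [0.42, -1.27, 0.05, 0.9]  # made-up example weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(codes)  # [42, -127, 5, 90]
print(f"worst-case rounding error: {max_error:.2e} (bound: {scale / 2:.2e})")
```

Each weight now occupies one byte instead of four (relative to FP32), and the rounding error per weight is bounded by half the scale. Whether that error is acceptable depends on the model and has to be checked against task accuracy.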

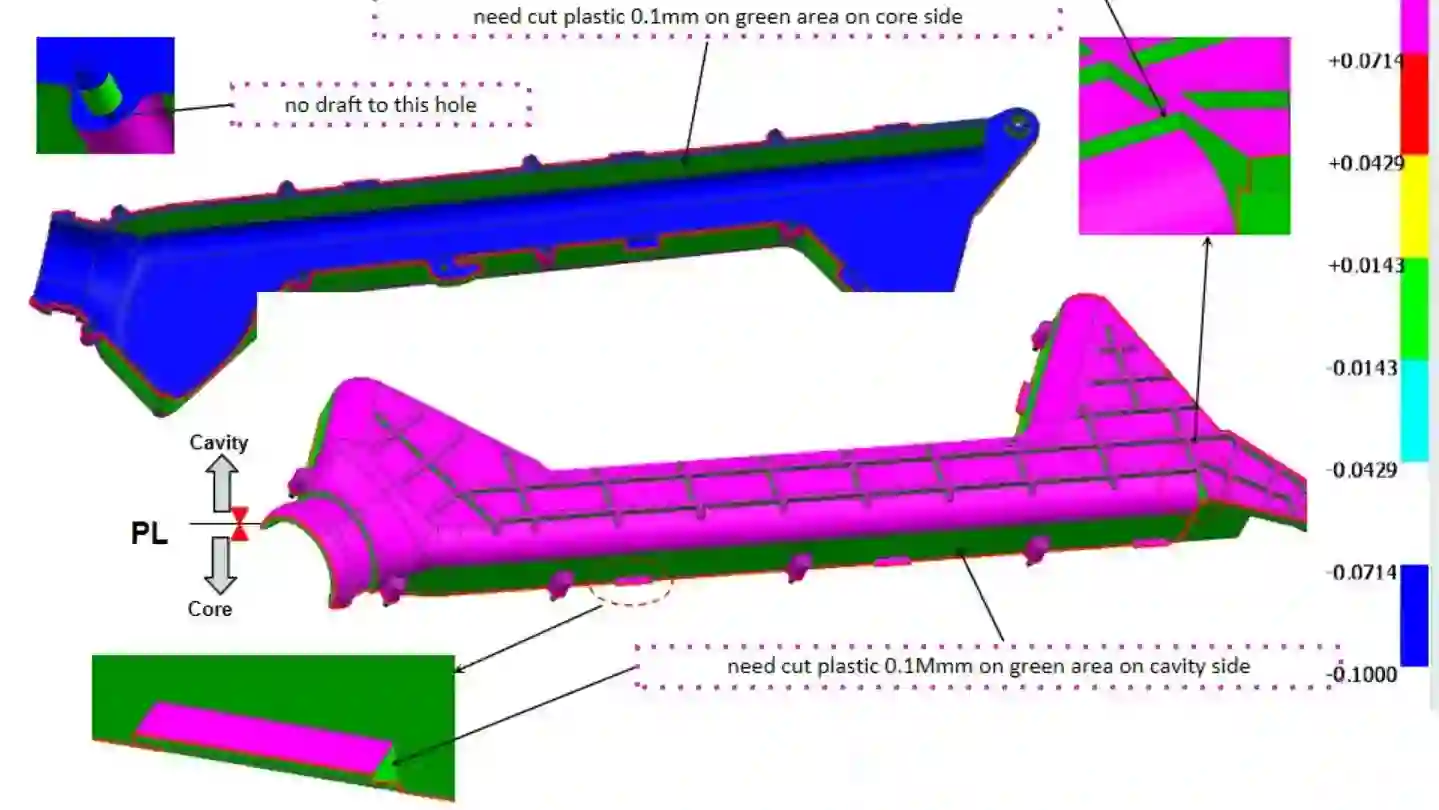

Thermal Design

AI processing generates significant heat. In cloud data centers, this is managed through elaborate cooling systems—liquid cooling, immersion, custom heat sinks. On edge devices, thermal design must work within consumer product form factors.

For hardware founders, thermal challenges manifest in several ways:

- Enclosure design: Heat must be conducted from internal components to external surfaces

- Material selection: Metals such as aluminum and copper conduct heat far better than plastics; thermal interface materials (TIMs) bridge gaps between components and heat spreaders

- Active cooling: Fans or liquid cooling add complexity, noise, and failure modes

- User comfort: Wearables and handheld devices cannot exceed temperatures that cause user discomfort

Early thermal simulation and prototyping can identify issues before major investments in tooling.
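Before running a full simulation, a first-order estimate can flag obvious problems. The sketch below uses the standard steady-state relation, temperature rise equals dissipated power times thermal resistance. All numbers are hypothetical: the 10 °C/W junction-to-surface resistance, the 25 °C ambient, and the 43 °C surface limit are placeholders, and real limits should come from the safety standard that applies to the product.

```python
def steady_state_temp_c(ambient_c: float, power_w: float,
                        theta_c_per_w: float) -> float:
    """Surface temperature once heat flow has stabilized."""
    return ambient_c + power_w * theta_c_per_w


def max_power_w(ambient_c: float, limit_c: float,
                theta_c_per_w: float) -> float:
    """Largest sustained power that keeps the surface at or below limit_c."""
    return (limit_c - ambient_c) / theta_c_per_w


AMBIENT_C = 25.0  # assumed room temperature
THETA = 10.0      # assumed thermal resistance to the outer surface, in C per watt
LIMIT_C = 43.0    # assumed comfort limit for a skin-contact surface

print(steady_state_temp_c(AMBIENT_C, 2.0, THETA))           # 45.0, over the assumed limit
print(round(max_power_w(AMBIENT_C, LIMIT_C, THETA), 2))     # 1.8 W sustained budget
```

In this example a 2 W sustained inference load would overshoot the assumed limit, which suggests either lowering thermal resistance through enclosure and material choices or capping sustained power at about 1.8 W.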

Connectivity and Update Strategy

Edge AI products require thoughtful connectivity design:

- Over-the-air (OTA) updates: Essential for fixing bugs, adding features, and updating AI models post-launch

- Bandwidth management: Model updates can be large; delta updates and compression reduce transmission requirements

- Offline capability: Products must continue functioning during connectivity gaps

- Security: OTA updates require secure boot, encrypted transmission, and authentication mechanisms

Planning for product lifecycle management—including update infrastructure, rollback capabilities, and end-of-support policies—should begin during initial design, not as an afterthought.
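The update and rollback flow can be prototyped long before firmware exists. The sketch below models a two-slot (A/B) scheme in Python: verify the payload, write it to the inactive slot, switch over, and fall back if a self-test fails. The `Device` class and the `self_test` callback are hypothetical. Note also that a SHA-256 check only proves the payload arrived intact; a real product additionally needs a cryptographic signature verified against a key anchored in secure boot.

```python
import hashlib
import hmac
from typing import Callable


def payload_is_intact(payload: bytes, expected_sha256_hex: str) -> bool:
    """Integrity check only; authenticity requires signature verification."""
    actual = hashlib.sha256(payload).hexdigest()
    return hmac.compare_digest(actual, expected_sha256_hex)


class Device:
    """Toy model of a device with two firmware slots."""

    def __init__(self, firmware: bytes) -> None:
        self.slots = {"A": firmware, "B": b""}
        self.active = "A"

    def install(self, payload: bytes, expected_sha256_hex: str,
                self_test: Callable[[bytes], bool]) -> bool:
        if not payload_is_intact(payload, expected_sha256_hex):
            return False  # reject corrupted download, keep running old image
        previous = self.active
        inactive = "B" if previous == "A" else "A"
        self.slots[inactive] = payload  # never overwrite the running image
        self.active = inactive
        if not self_test(self.slots[self.active]):
            self.active = previous      # roll back to the known-good slot
            return False
        return True


device = Device(b"firmware-v1")
update = b"firmware-v2"
digest = hashlib.sha256(update).hexdigest()

print(device.install(update, digest, self_test=lambda image: True))  # True
print(device.active)                                                 # B
```

Because the running image is never overwritten, a failed self-test or an interrupted download leaves the device on its last known-good firmware.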

How a Hardware Development Partner Can Help

Building edge AI hardware requires expertise spanning multiple domains: embedded systems, AI/ML integration, thermal engineering, regulatory compliance, and manufacturing at scale. Few startups have all these capabilities in-house.

A full-service product development partner can provide:

- Cross-disciplinary expertise: Industrial designers, mechanical engineers, hardware engineers, and software developers working collaboratively from concept through production

- Component sourcing relationships: Access to AI chips, sensors, and components through established supply chains

- Manufacturing network: Connections to prototyping shops and injection molders experienced with edge AI hardware

- Regulatory experience: Knowledge of EMC/EMI requirements, safety standards, and wireless certification processes

At OPD Design, we’ve helped hardware founders navigate the transition to edge AI, from selecting appropriate chipsets to designing thermal management systems and preparing for regulatory approval. Our end-to-end capabilities—from initial concept through mass production—allow founders to maintain continuity through the development journey.

Frequently Asked Questions

What is the difference between cloud AI and edge AI?

Cloud AI processes data in remote data centers, requiring internet connectivity and transmitting data to/from the cloud. Edge AI processes data locally on the device itself, reducing latency, enabling offline operation, and improving privacy. Edge AI is typically used for real-time inference tasks, while cloud AI remains important for model training and complex batch processing.

Why is edge AI becoming more popular in 2026?

Several factors drive edge AI adoption: improved cost economics as inference volumes grow, stricter data privacy regulations, latency requirements for real-time applications, and significant chip improvements enabling larger AI models on smaller devices. The “inference flip”—where inference workloads now exceed training workloads—has accelerated investment in inference-optimized edge hardware.

How do I choose the right AI chip for my edge device?

Consider these factors: power budget (battery vs. mains powered), performance requirements (what AI tasks need to run?), connectivity constraints (offline vs. connected operation), form factor (wearable vs. stationary device), and cost targets. Inference-optimized chips from companies like NVIDIA, AMD, Qualcomm, and specialized AI chip startups offer different trade-offs. A development partner can help evaluate options against your specific requirements.

What are the main challenges in designing edge AI hardware?

Key challenges include thermal management (dissipating heat in consumer form factors), power optimization (extending battery life while running AI workloads), model optimization (fitting models into device memory and processing constraints), and regulatory compliance (especially for health-related applications). Successfully navigating these challenges requires multidisciplinary expertise and early planning.

Do edge AI products still need cloud connectivity?

Most edge AI products include some cloud connectivity for updates, backup, and supplementary processing. However, core functionality should work offline. The best products intelligently balance local and cloud processing based on task requirements, network availability, and user preferences. Designing this partitioning requires careful architecture planning early in development.

How does edge AI affect product development timelines?

Edge AI adds complexity to hardware development, potentially extending timelines by 2-4 months compared to non-AI products. Additional considerations include AI model selection and optimization, thermal testing, power budget validation, and potentially regulatory approval for health-related features. However, this investment often creates significant competitive differentiation and enables premium pricing.

Conclusion: The Edge AI Opportunity for Hardware Founders

The year 2026 marks an inflection point for edge AI in consumer and industrial products. The technology has matured, components are readily available, and market demand is accelerating. For hardware founders, the question is no longer whether to leverage AI but how to do so effectively.

Success in edge AI hardware requires thoughtful integration of multiple engineering disciplines, careful component selection, and realistic planning for the challenges of thermal management, power optimization, and productization. The investment is significant, but the competitive advantages—superior user experience, offline reliability, privacy protection, and lower operating costs—create durable market positions.

Whether you’re developing next-generation smart wearables, industrial AIoT devices, or consumer robots, the edge AI revolution offers unprecedented opportunities for hardware founders willing to master its unique challenges.

Need help bringing your edge AI hardware to life? Contact OPD Design to discuss your project requirements.